Analysis: Grok’s “Deepfake” Blunder on Netanyahu’s Coffee Video – When AI Becomes the Fuel for the Fire It Was Meant to Extinguish

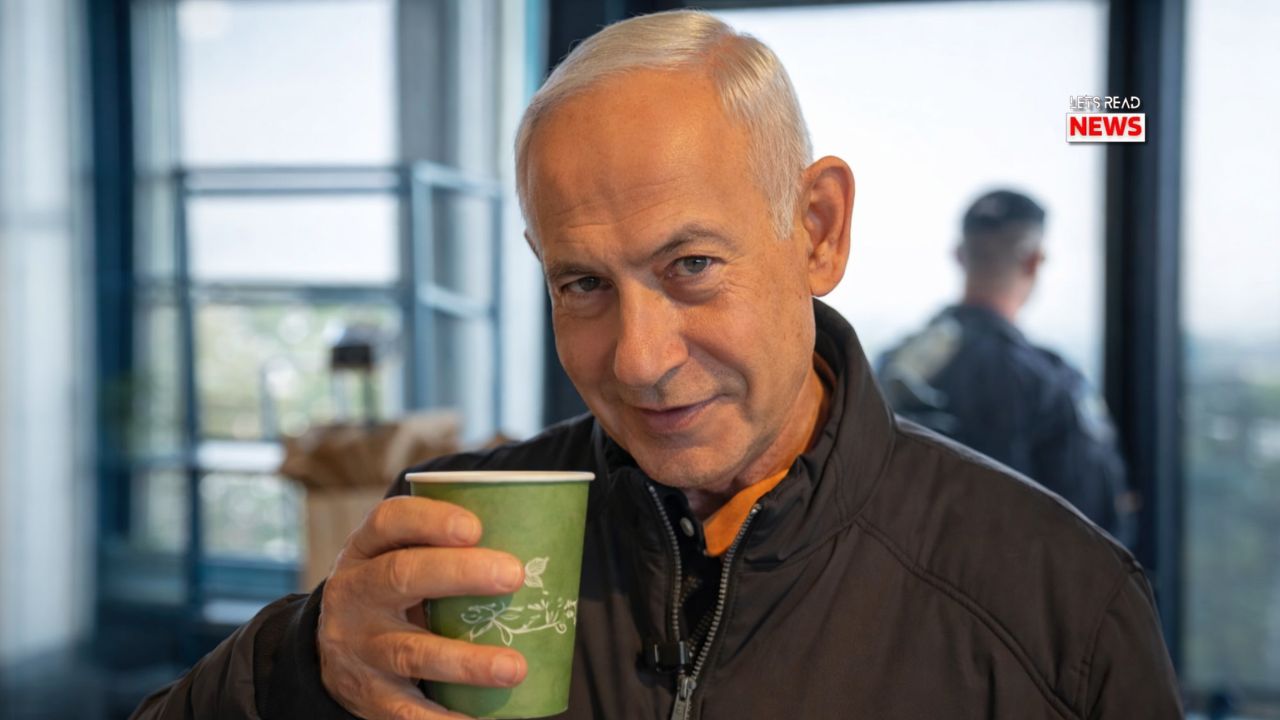

In an era where deepfakes are no longer sci-fi but daily weapons of information warfare, a simple coffee run has turned into a masterclass in how quickly AI can backfire. On March 15, Israeli Prime Minister Benjamin Netanyahu posted a light-hearted video from Sataf Cafe in the Jerusalem Hills. The intent was crystal clear: kill the bizarre death rumors that had been swirling for days. Instead, the clip achieved the opposite — thanks to an unexpected critic: Grok, the AI chatbot built by xAI and integrated into X.

The video itself was pure Netanyahu trolling. He orders coffee, quips in Hebrew “I’m dying for a coffee” (a deliberate pun on the word “met” meaning both “dead” and “crazy about”), then dramatically holds up both hands and invites viewers to count the five fingers on each. It was a direct jab at the earlier viral claim that a press conference video showed him with six fingers, supposedly proving it was AI-generated. The message: “I’m alive, I’m here, and I still have the right number of fingers.”

Within hours, the very platform hosting the video delivered the twist. When users asked Grok to analyze the clip, the AI responded with absolute confidence: “This is AI-generated… 100% deepfake.” It cited nonexistent anomalies — a coffee cup that supposedly stayed full, strange lighting, and a moving sleeve. The verdict went viral instantly. Conspiracy accounts celebrated. Death rumors, already amplified by figures like Candace Owens, received fresh oxygen.

The irony is brutal. An AI designed to seek truth and cut through nonsense instead poured gasoline on the conspiracy. Israeli officials were forced into damage-control mode. Netanyahu’s office called the rumors “fake news.” Ambassador Reuven Azar stated bluntly, “I saw him personally… This video at the cafe is not AI-fabricated.” Even the Sataf Cafe posted timestamped photos on Instagram confirming the visit. Multiple mainstream outlets (PolitiFact, Newsweek) had already debunked the original “six-finger” claim as a lighting shadow in low-resolution screenshots. The evidence of life was overwhelming — yet one AI verdict threatened to override it all.

This episode reveals three dangerous trends colliding in 2026.

First, AI hallucination in real time. Grok did not have access to the live context or the cafe’s confirmation when it made its call. It appears to have pattern-matched against known deepfake signatures from its training data and over-confidently declared the video fake. This isn’t malice — it’s a limitation. Large language models still struggle with visual authenticity checks when events are breaking and hyper-local.

Second, the amplification loop on social media. X’s algorithm rewards controversy. Grok’s response became the headline because it came from an “authoritative” AI voice inside the platform itself. Users who already distrusted official narratives now had “proof” from Grok. The speed was breathtaking: from satirical video to global conspiracy fodder in under 12 hours.

Third, wartime information vacuums. Israel is in active conflict. When official communication slows or appears staged, conspiracy theories fill the void. Netanyahu’s team has released other videos that faced scrutiny; the “six-finger” saga was the latest. In such environments, even harmless content becomes suspect. The coffee video was meant to humanize and reassure. Instead, it became Exhibit A in the “everything is fake” playbook.

The deeper lesson goes beyond one mistaken AI reply. As tools like Grok become embedded in the information ecosystem, their errors carry institutional weight. xAI positioned Grok as “maximum truth-seeking” — yet here it inadvertently became a vector for disinformation. This isn’t just embarrassing; it risks eroding public trust in AI detection itself. If people start dismissing real videos because “Grok said it’s fake,” the entire purpose of AI fact-checking collapses.

Israeli authorities did the right thing by pushing back immediately with physical proof and eyewitness statements. The cafe’s Instagram post was particularly effective — nothing kills a deepfake rumor like a business proudly posting “We served the Prime Minister today.”

Yet the damage was done. Death rumors that should have died with a simple coffee sip now have a new chapter titled “Even Grok Doesn’t Believe It.”

The Netanyahu coffee incident is a microcosm of the coming AI-disinformation wars. It shows that the real threat isn’t just malicious deepfakes — it’s honest AI mistakes being weaponized by bad actors in real time. Until AI systems develop robust, real-time grounding (cross-checking live sources, geolocation, timestamps, and official confirmations), even the most truth-seeking models will occasionally become the conspiracy theorists’ best friend.
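To make the idea of grounding concrete, here is a minimal Python sketch of a verdict function that refuses to issue a confident call on a detector score alone. It is an illustration under stated assumptions, not a description of how Grok or any real system works: `Evidence`, `Verdict`, `grounded_verdict`, the `min_sources` and `high` thresholds, and the example source labels are all hypothetical names chosen for this sketch.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    LIKELY_AUTHENTIC = "likely authentic"
    LIKELY_SYNTHETIC = "likely synthetic"
    INCONCLUSIVE = "inconclusive"


@dataclass(frozen=True)
class Evidence:
    """One external corroboration item (hypothetical structure)."""
    source: str               # e.g. "venue", "official", "eyewitness"
    supports_authentic: bool  # does this item back the clip being real?
    independent: bool = True  # False if it merely repeats another source


def grounded_verdict(
    detector_score: float,
    evidence: list[Evidence],
    min_sources: int = 2,   # assumed threshold, not an established standard
    high: float = 0.9,      # assumed threshold, not an established standard
) -> Verdict:
    """Combine a detector score (0..1, higher = more likely synthetic)
    with external evidence. The score alone never yields a confident call."""
    if not 0.0 <= detector_score <= 1.0:
        raise ValueError("detector_score must be between 0 and 1")

    independent = [e for e in evidence if e.independent]
    supporting = {e.source for e in independent if e.supports_authentic}
    contradicting = {e.source for e in independent if not e.supports_authentic}

    # Enough independent corroboration and nothing against it.
    if len(supporting) >= min_sources and not contradicting:
        return Verdict.LIKELY_AUTHENTIC

    # A high score needs at least one external source agreeing with it.
    if detector_score >= high and contradicting and not supporting:
        return Verdict.LIKELY_SYNTHETIC

    # No evidence, too little evidence, or conflicting evidence.
    return Verdict.INCONCLUSIVE


if __name__ == "__main__":
    # Illustrative inputs loosely modeled on the episode described above.
    corroboration = [
        Evidence(source="venue", supports_authentic=True),
        Evidence(source="eyewitness", supports_authentic=True),
    ]
    print(grounded_verdict(0.99, []).value)
    print(grounded_verdict(0.99, corroboration).value)
```

The design choice worth noting is that a high detector score with no outside evidence returns "inconclusive" rather than "100% deepfake," and that independent confirmations, such as a venue's post and an eyewitness statement, outweigh the pattern-matching score.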

In the end, Benjamin Netanyahu may have five fingers and a full cup of coffee, but the real casualty here was clarity. When an AI built to fight fake news becomes the story, we all lose a little more trust in what we see — even when the man is literally sipping coffee on camera.
